Technical SEO Guide for NSFW AI Companions

This guide explains the most common technical SEO challenges faced by NSFW AI companion platforms, including indexing issues, rendering problems, and crawlability barriers. It provides practical, experience-based solutions to improve visibility, structure, and long-term organic growth.

Published Date: April 7, 2026

At SEO Circular, we have worked with 15+ globally popular AI companion chatbots, including platforms like SugarLab AI, CandyAI, and MyDreamCompanion. Based on that implementation experience, we have identified and fixed many common technical SEO issues, along with some very unusual ones that most developers and founders do not notice until their website stops performing in search.

Over time, we have seen AI companion platforms grow rapidly, and we expect them to stay in demand beyond [year]. User behavior shows strong adoption of personalized AI interaction across the US, Europe, and emerging markets, where people actively engage with these platforms for real-time, customized digital companionship.

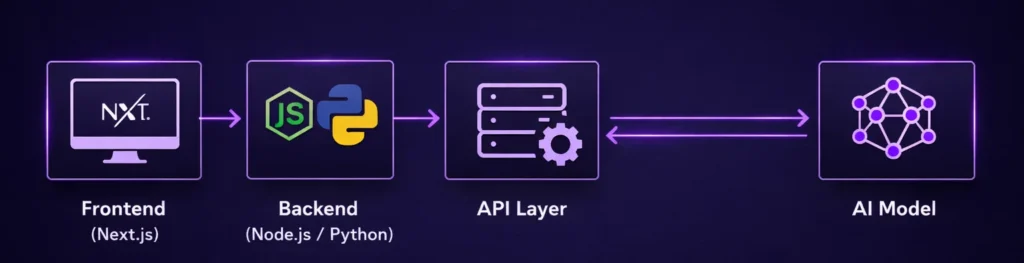

The most important thing to understand is that building an AI companion platform today requires a modern, complex tech stack that usually includes:

- AI models (LLMs or fine-tuned systems)

- API-driven response layers

- Backend systems like Node.js or Python

- Frontend frameworks such as Next.js

- Rendering approaches like Server-Side Rendering (SSR), Client-Side Rendering (CSR), or hybrid setups

While this stack is powerful from a product and engineering point of view because it supports real-time responses, scalability, and a smooth user experience, it also creates serious technical SEO challenges that are very different from those of traditional websites.

Because of this complexity, we have seen many cases where platforms had a strong product, active users, and good engagement, but still failed to generate organic traffic simply because search engines were not able to properly crawl, render, or index their content.

In this guide, we explain the technical SEO issues that affect AI companion platforms and the practical ways to fix them, based on real project experience. Before you continue, keep one thing in mind: this is not a basic SEO article. It demands patience, because we go deep into real technical problems and their solutions.

Fix Your AI Companion SEO Before It Kills Your Growth

If your AI platform is not getting indexed or ranking despite having a strong product, then the issue is not your idea but your technical SEO. At SEO Circular, we have already fixed these problems for 15+ global AI companion platforms, and we can do the same for you with a clear, result-driven strategy.

Modern AI Companion Platforms Use Complex Tech Stacks

- AI companion platforms look perfect for users, but search engines often receive empty or incomplete HTML, which directly impacts indexing and ranking.

- Heavy reliance on JavaScript, APIs, and dynamic rendering makes it difficult for Googlebot to access and understand real content during the first crawl.

- Issues like BAILOUT_TO_CLIENT_SIDE_RENDERING, JS chunk loading, and streaming responses delay or block content visibility for search engines.

- Dynamic and session-based AI content does not create stable, indexable pages, which weakens keyword targeting and SEO structure.

- From Googlebot’s perspective, many pages appear as “Loading page…” without keywords, structure, or meaningful signals required for ranking.

- Without fixing rendering, content delivery, and crawlability, even strong AI platforms fail to generate organic traffic and remain dependent on paid acquisition.

What Makes NSFW AI Companion Websites SEO-Difficult?

Dynamic AI-Generated Content Creates Indexing Gaps

In AI companion platforms, most of the content is generated based on user interaction, which means there is no fixed or pre-defined content available for search engines to crawl and understand.

- Content is generated after user input: This means pages do not have static text that can be indexed during the initial crawl, and search engines may not wait for interaction-based content.

- Responses vary for each user: Since AI replies are personalized, there is no consistent version of content that can be indexed and ranked.

- No stable keyword targeting: Because content changes dynamically, it becomes difficult to optimize pages for specific keywords in a controlled way.

Login Walls and Session-Based Content Block Crawling

Most AI companion platforms require users to log in before accessing core features, which creates a major barrier for search engines. Crawlers cannot access content that sits behind authentication, so valuable pages remain invisible.

Heavy JavaScript Dependency Reduces Visibility

Modern frameworks rely heavily on JavaScript, and while this improves user experience, it creates challenges for search engines that depend on initial HTML.

- Content loads after JavaScript execution

If rendering happens on the client side, search engines may not see full content immediately.

- Delayed rendering affects indexing

Even if Google eventually renders JavaScript, delays can reduce crawl efficiency and indexing priority.

- Resource-heavy pages impact crawl budget

Large JS files and API calls consume more resources, which can limit how many pages get crawled.

Restricted and Sensitive Content Handling (NSFW Factor)

NSFW AI companion platforms face an additional layer of complexity because content must be controlled and compliant while still being indexable.

Age Gate Blocking Crawlers (Wrong Implementation)

AI companion platforms, especially NSFW ones, often implement age verification systems, but as per our experience, many websites implement this in a way that blocks search engines completely instead of just controlling user access.

- Full-screen age gate blocks HTML content

When age validation is implemented as a blocking layer before page load, search engines are unable to access the actual page content, which results in zero indexable data.

- JavaScript-based age verification hides content

If the main content is loaded only after age confirmation via JavaScript, then crawlers may never see the actual content because they do not interact like real users.

- Incorrect bot handling leads to deindexing

When bots are treated the same as users without a fallback mechanism, important pages fail to get indexed or lose rankings over time.

Correct SEO Approach for Age Gate

An age gate should be implemented so that the HTML content is still accessible to crawlers, using techniques like server-side rendering, conditional rendering, or bot-friendly fallbacks, while still maintaining compliance.
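One way to apply this is to ship the page content in the server response and draw the age gate as an overlay on top of it. The sketch below is a minimal illustration in plain JavaScript, not a definitive implementation: `renderGatedPage`, the `age-gate` element id, and the `ageConfirmed` flag are hypothetical names, and we assume the flag is read on the server (for example from a cookie).

```javascript
// Escape text so it can be placed safely inside HTML.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Build the full HTML for a page. The main content is always part of the
// response; the age gate is an overlay element that is added only when the
// visitor has not confirmed their age yet (hypothetical `ageConfirmed` flag).
function renderGatedPage({ title, bodyText, ageConfirmed }) {
  const overlay = ageConfirmed
    ? ""
    : '<div id="age-gate" role="dialog" aria-modal="true">' +
      "<p>You must be 18 or older to continue.</p>" +
      '<button id="age-confirm">I am 18 or older</button>' +
      "</div>";

  return (
    "<!doctype html><html><head><title>" + escapeHtml(title) + "</title></head>" +
    "<body><main><h1>" + escapeHtml(title) + "</h1>" +
    "<p>" + escapeHtml(bodyText) + "</p></main>" +
    overlay +
    "</body></html>"
  );
}

const html = renderGatedPage({
  title: "AI Roleplay Chat",
  bodyText: "Feature overview that should be indexable.",
  ageConfirmed: false,
});
console.log(html.includes("Feature overview")); // true
```

The point of the design is that the gate changes what the visitor can interact with, not what the server sends, so the indexable text is present in the first HTML response either way.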

Too Many Similar Character Pages Create Low-Quality Index

We have seen many AI companion platforms allow users or admins to create unlimited character profiles. Over time this leads to thousands of similar pages with very little differentiation, which directly affects SEO quality because search engines start treating the site as low-value or spam-like.

- Multiple pages with similar intent and structure

When character pages follow the same template with minor changes like name, image, or personality, search engines see them as near-duplicate content instead of unique value pages.

- Thin content with no real informational depth

Most character pages do not contain enough structured or meaningful content, which reduces their chances of ranking and increases the risk of being ignored or deindexed.

- Crawl budget gets wasted on low-value pages

Instead of focusing on important pages, search engines spend time crawling thousands of similar URLs, which affects indexing efficiency of high-priority content.

- Overall domain quality signal gets impacted

When a large portion of the website contains low-value or duplicate-like pages, it can reduce trust and authority in the eyes of search engines over time.
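To find out how similar your character pages really are, you can compare their text before deciding what to index. The sketch below uses Jaccard similarity over three-word shingles, which is one common way to estimate near-duplication; it is our illustration, not how any search engine scores pages. The `url` and `text` fields and the 0.8 threshold are assumptions chosen for the example.

```javascript
// Split text into lowercase words and return the set of 3-word shingles.
function shingles(text, size = 3) {
  const words = text.toLowerCase().match(/[a-z0-9']+/g) || [];
  const result = new Set();
  for (let i = 0; i + size <= words.length; i++) {
    result.add(words.slice(i, i + size).join(" "));
  }
  return result;
}

// Jaccard similarity: shared shingles divided by all distinct shingles.
function jaccard(a, b) {
  if (a.size === 0 && b.size === 0) return 1;
  let shared = 0;
  for (const item of a) if (b.has(item)) shared++;
  return shared / (a.size + b.size - shared);
}

// Return every pair of pages whose similarity meets the threshold.
function findNearDuplicates(pages, threshold = 0.8) {
  const sets = pages.map((page) => shingles(page.text));
  const pairs = [];
  for (let i = 0; i < pages.length; i++) {
    for (let j = i + 1; j < pages.length; j++) {
      const score = jaccard(sets[i], sets[j]);
      if (score >= threshold) {
        pairs.push({ a: pages[i].url, b: pages[j].url, score });
      }
    }
  }
  return pairs;
}
```

The pairwise loop is fine for a few thousand pages; a much larger catalog would need a sampling or hashing approach instead.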

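The correct approach is as follows:

If you are planning to create character pages at scale, control indexing so that only high-quality, important pages are indexed. Use robots.txt to stop crawlers from fetching sections you never want crawled, and use a meta noindex tag for pages that should stay crawlable but out of search results. Do not combine both on the same URL: a crawler that is blocked by robots.txt never fetches the page, so it never sees the noindex tag.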

How to Control Indexing Using robots.txt

You can prevent search engines from crawling low-value character pages by disallowing specific URL patterns in your robots.txt file.

    User-agent: *
    Disallow: /characters/
    Disallow: /ai-characters/
    Disallow: /profile/

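This keeps crawlers from spending time on bulk-generated pages that are not useful for SEO. Adjust the patterns to your own URL structure, and do not disallow a directory that also holds pages you want to rank.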
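Before deploying rules like these, it helps to check which URLs they would actually block. The sketch below is a deliberately simplified checker: it reads only `Disallow` lines in the `User-agent: *` group, assumes one `User-agent` line per group, and uses plain prefix matching, so it ignores `Allow` rules and wildcards that real crawlers support. The function names are ours.

```javascript
// Collect the Disallow prefixes that apply to all crawlers ("User-agent: *").
function parseDisallowRules(robotsTxt) {
  const rules = [];
  let appliesToAll = false;
  for (const rawLine of robotsTxt.split("\n")) {
    const line = rawLine.split("#")[0].trim(); // drop comments and whitespace
    if (line === "") continue;
    const separator = line.indexOf(":");
    if (separator === -1) continue;
    const field = line.slice(0, separator).trim().toLowerCase();
    const value = line.slice(separator + 1).trim();
    if (field === "user-agent") {
      appliesToAll = value === "*";
    } else if (field === "disallow" && appliesToAll && value !== "") {
      rules.push(value);
    }
  }
  return rules;
}

// Simplified check: a path is blocked when it starts with a Disallow prefix.
function isBlocked(path, rules) {
  return rules.some((prefix) => path.startsWith(prefix));
}

const robotsTxt = [
  "User-agent: *",
  "Disallow: /characters/",
  "Disallow: /ai-characters/",
  "Disallow: /profile/",
].join("\n");

const rules = parseDisallowRules(robotsTxt);
console.log(isBlocked("/characters/anime-ai-girlfriend/", rules)); // true
console.log(isBlocked("/blogs/technical-seo-ai-platforms/", rules)); // false
```

Note that the first check returns true: a blanket `/characters/` rule also blocks any curated character page under that directory, so pages you want indexed need a different path or a narrower rule.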

How to Use Meta Noindex for Character Pages

In cases where pages should be accessible but not indexed, you can use the noindex meta tag inside the page head section.

    <meta name="robots" content="noindex, follow">

This allows search engines to:

- crawl the page

- follow links inside the page

- but avoid adding it to search results
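In practice, the tag is usually decided per page from some quality signal rather than hard-coded. The sketch below shows one possible rule; `descriptionWordCount`, `hasUniqueBio`, and the 150-word minimum are hypothetical fields and thresholds that you would replace with your own criteria.

```javascript
// Decide the robots meta tag for a character page.
// The page fields and the default threshold are illustrative assumptions.
function robotsMetaTag(page, minWords = 150) {
  const indexable = page.hasUniqueBio && page.descriptionWordCount >= minWords;
  const content = indexable ? "index, follow" : "noindex, follow";
  return '<meta name="robots" content="' + content + '">';
}

console.log(robotsMetaTag({ descriptionWordCount: 40, hasUniqueBio: true }));
// <meta name="robots" content="noindex, follow">
```

Keeping the decision in one function makes it easy to audit which pages are excluded and to loosen the rule as character pages gain real content.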

Next.js & Rendering Deep Dive: Why Most AI Companion Websites Fail in SEO

As per our experience at SEO Circular, most AI companion platforms today are built using modern frameworks like Next.js, and while these frameworks are excellent for performance and user experience, they can silently break your SEO if rendering is not handled correctly from the beginning.

The biggest mistake we have seen is that developers optimize for speed and interactivity, but ignore how HTML is delivered to search engines, and this is where issues like non-indexing, partial indexing, or delayed indexing start appearing.

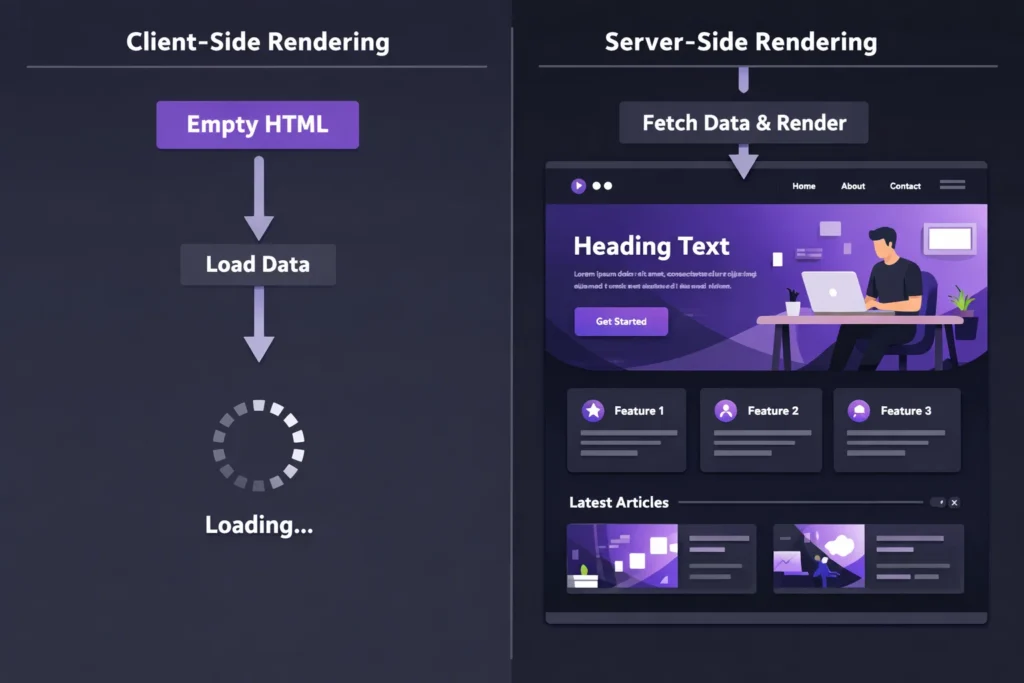

Client-Side Rendering (CSR) — The Root Cause of Indexing Failures

In many AI companion platforms, developers rely heavily on client-side rendering because it makes the application feel fast and interactive, but this approach creates serious problems for search engines.

- Initial HTML response is empty or minimal

When a page is loaded, the server sends almost no content, and the actual data is fetched later through JavaScript, which means search engines do not immediately see meaningful content.

- Content depends on JavaScript execution

If Googlebot does not fully execute JavaScript or delays rendering, then important content may never be indexed.

- Common Next.js issue: BAILOUT_TO_CLIENT_SIDE_RENDERING

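This happens when the framework falls back to CSR and, instead of sending pre-rendered HTML, pushes rendering to the browser. In the App Router, typical triggers include calling useSearchParams without a Suspense boundary and loading a component through next/dynamic with ssr: false.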
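A quick way to audit for this is to search the raw server HTML of each important URL for the marker string. The sketch below assumes the marker appears verbatim in the response, which is how we have seen it in page source; the helper names and the sample HTML are made up for illustration.

```javascript
// Marker string discussed in this section.
const BAILOUT_MARKER = "BAILOUT_TO_CLIENT_SIDE_RENDERING";

// Return true when the raw server HTML contains the bailout marker.
function hasCsrBailout(rawHtml) {
  return rawHtml.includes(BAILOUT_MARKER);
}

// Check several pages at once; `pages` maps a URL to its raw HTML.
function findBailoutPages(pages) {
  return Object.keys(pages).filter((url) => hasCsrBailout(pages[url]));
}

// Sample input (hypothetical HTML, shortened for the example).
const samplePages = {
  "/landing/ai-companion-app/": "<main><h1>AI Companion App</h1></main>",
  "/characters/": '<template data-dgst="BAILOUT_TO_CLIENT_SIDE_RENDERING"></template>',
};
console.log(findBailoutPages(samplePages)); // only "/characters/" is flagged
```

Run a check like this against the HTML returned by the server, not the DOM in your browser's inspector, because the inspector shows the page after JavaScript has already run.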

JavaScript Chunk-Based Rendering (__next_f.push Issue)

Another issue we have frequently observed is content being delivered via JavaScript chunks instead of being embedded in HTML.

- Data is injected using __next_f.push

Instead of having full HTML, the content is dynamically pushed through JS chunks, which delays visibility for crawlers.

- HTML does not contain actual content

Search engines rely most on the initial HTML for indexing. If content is missing there, indexing depends on a later rendering pass and becomes unreliable.

- Rendering depends on hydration

Until hydration completes, the page does not contain usable content, which creates a gap between user view and crawler view.

👉 Result:

Search engines may partially index or completely skip such pages.
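To see the gap between the user view and the crawler view, you can measure how much readable text the raw HTML contains once scripts are stripped out. The sketch below uses regular expressions, which is a rough approximation rather than a real HTML parser; the function names and sample markup are ours.

```javascript
// Remove script, style, and template blocks, then all remaining tags,
// and return the text that is readable without running JavaScript.
function visibleText(rawHtml) {
  return rawHtml
    .replace(/<(script|style|template)\b[^>]*>[\s\S]*?<\/\1>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// Count the words in the visible text.
function visibleWordCount(rawHtml) {
  const text = visibleText(rawHtml);
  return text === "" ? 0 : text.split(" ").length;
}

// A shell page: the real copy lives only inside a script payload.
const shellHtml =
  '<html><body><div id="root">Loading page...</div>' +
  '<script>self.__next_f.push([1,"Real content here"])</script></body></html>';

console.log(visibleText(shellHtml)); // Loading page...
console.log(visibleWordCount(shellHtml)); // 2
```

A landing page that returns only a handful of visible words in its raw HTML is a strong hint that its content arrives through JavaScript.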

Server-Side Rendering (SSR) — The Correct Foundation for SEO

From an SEO perspective, server-side rendering is one of the most reliable approaches because it ensures that content is available in the initial HTML response.

- Full HTML is sent from the server

This allows search engines to immediately read and index the content without waiting for JavaScript execution.

- Faster indexing and better crawlability

Since content is already present, Google can process pages efficiently.

- Ideal for landing pages and SEO content

Pages that target keywords should always use SSR to ensure maximum visibility.

👉 Example (Next.js SSR):

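    // Pages Router: runs on the server for every request
    export async function getServerSideProps() {
      const res = await fetch('https://api.example.com/data');
      const data = await res.json();
      return {
        props: { data }, // rendered into the HTML before it is sent
      };
    }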

This ensures that content is rendered on the server before being sent to the browser.

Incremental Static Regeneration (ISR) — Best Balance for AI Platforms

In many cases, AI platforms need both performance and SEO, and this is where ISR becomes very powerful.

- Pages are pre-rendered and updated periodically

This allows you to serve static HTML while still keeping content fresh.

- Reduces server load

Unlike SSR, ISR does not require rendering on every request.

- Works well for programmatic SEO pages

Character pages, landing pages, and category pages can benefit from ISR if structured correctly.

👉 Example (Next.js ISR):

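    // Pages Router: runs at build time, then again in the background on revalidation
    export async function getStaticProps() {
      const res = await fetch('https://api.example.com/data');
      const data = await res.json();
      return {
        props: { data },
        revalidate: 60, // Regenerate the page at most once every 60 seconds
      };
    }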

Streaming & Partial Rendering — Hidden SEO Risk

Modern Next.js versions support streaming and partial rendering, which improves performance but introduces SEO risks if not handled carefully.

- Content loads in parts instead of full HTML

Search engines may capture only the initial part of the page.

- Important content may be delayed

If key sections load later, they may not be indexed properly.

- Requires careful prioritization

Critical SEO content should always be included in the first HTML response.

Best Rendering Strategy for AI Companion Platforms

As per our experience at SEO Circular, the most effective approach is not choosing one rendering method, but combining them strategically.

- Use SSR for SEO landing pages

Ensure all important pages are fully indexable.

- Use ISR for scalable content pages

Handle large volumes of pages efficiently.

- Avoid CSR for indexable content

Keep CSR limited to app-level interactions only.

- Separate app and SEO layers

Your chatbot interface and SEO pages should not depend on the same rendering logic.
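The combination above can be written down as a small routing table, so that every new route gets an explicit rendering decision. The sketch below is only one way to express it: the prefixes reuse example paths from this guide, while the rule order and the SSR default are our assumptions.

```javascript
// Map URL prefixes to a rendering mode. First matching rule wins.
const RENDERING_RULES = [
  { prefix: "/app/", mode: "csr" }, // chat interface, not indexable
  { prefix: "/dashboard", mode: "csr" }, // user-specific pages
  { prefix: "/characters/", mode: "isr" }, // large volume of similar pages
  { prefix: "/blogs/", mode: "isr" }, // content that changes occasionally
  { prefix: "/landing/", mode: "ssr" }, // keyword-targeted landing pages
];

// Return the rendering mode for a path, defaulting to SSR so that an
// unlisted public page is still delivered as full HTML.
function renderingModeFor(path) {
  const rule = RENDERING_RULES.find((r) => path.startsWith(r.prefix));
  return rule ? rule.mode : "ssr";
}

console.log(renderingModeFor("/app/chat")); // csr
console.log(renderingModeFor("/characters/anime-ai-girlfriend/")); // isr
```

Defaulting to SSR is the cautious choice here: a forgotten route costs some server time instead of silently shipping an empty shell to crawlers.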

How to Fix Indexing Issues in AI Companion Platforms (Step-by-Step)

As per our experience at SEO Circular, most indexing issues in AI companion platforms are not because of Google, but because of how content is delivered, and once you fix rendering and structure properly, indexing improves much faster than expected.

Use Server-Side Rendering for Important Pages

If your key pages are not rendered on the server, then search engines will struggle to understand them, so always ensure that SEO-focused pages return proper HTML in the initial response.

- Convert landing pages to SSR

This ensures that content is visible to crawlers without waiting for JavaScript execution.

- Avoid CSR for indexable URLs

Keep client-side rendering limited to app interactions, not SEO pages.

Create Static SEO Pages Separate from Chat App

AI chat interfaces are not designed for indexing, so you should not rely on them for SEO, and instead create dedicated pages that target search queries.

- Build landing pages for each use case

These pages should explain features, scenarios, and benefits in a structured way.

- Do not index chat session URLs

Since they are dynamic and user-specific, they should be excluded from search results.

Ensure Content Exists in Initial HTML Response

Search engines depend on the first HTML response, so if your content is not present there, indexing becomes slow and unreliable.

- Avoid loading critical content via API after page load

Important text should already be part of the HTML.

- Test pages without JavaScript

If content is not visible without JS, then Google may also struggle to see it.
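Alongside a manual test with JavaScript disabled, you can check the raw HTML for the basic elements a page needs in order to be understood. The sketch below looks for a non-empty title, a meta description, and a non-empty h1 using regular expressions; it is a rough check under those assumptions, and the function name is ours.

```javascript
// Report which basic SEO elements are missing from the raw server HTML.
function missingSeoElements(rawHtml) {
  const checks = {
    // <title> that is not immediately closed
    title: /<title\b[^>]*>(?!\s*<\/title>)/i,
    // <meta name="description" ...>
    metaDescription: /<meta\s[^>]*name=["']description["'][^>]*>/i,
    // <h1> that is not immediately closed
    h1: /<h1\b[^>]*>(?!\s*<\/h1>)/i,
  };
  return Object.keys(checks).filter((name) => !checks[name].test(rawHtml));
}

const emptyShell =
  '<html><head><title></title></head><body><div id="root"></div></body></html>';
console.log(missingSeoElements(emptyShell));
// all three elements are reported as missing
```

An empty result means the elements exist in the server response; it says nothing about their quality, so treat it as a first filter only.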

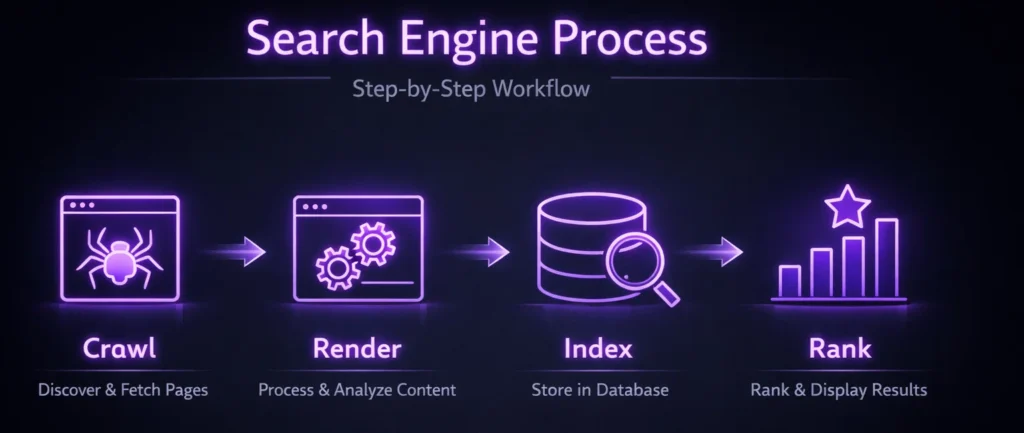

Optimize Sitemap and Crawl Flow

Search engines need clear signals to discover and prioritize pages, so your sitemap and internal linking should guide them properly.

- Include only indexable pages in sitemap

Avoid adding low-value or blocked URLs.

- Use internal linking to highlight important pages

This improves crawl efficiency and page importance.
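Generating the sitemap from the same page records that drive your indexing rules keeps the two in sync. The sketch below builds a minimal sitemap and skips anything marked as noindex or blocked; the `path`, `noindex`, and `blockedByRobots` fields are hypothetical names for whatever your own data model uses.

```javascript
// Escape characters that cannot appear as-is inside XML text.
function escapeXml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Build a sitemap that lists only indexable pages.
function buildSitemap(baseUrl, pages) {
  const entries = pages
    .filter((page) => !page.noindex && !page.blockedByRobots)
    .map((page) => "  <url><loc>" + escapeXml(baseUrl + page.path) + "</loc></url>");
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    "</urlset>",
  ].join("\n");
}

console.log(
  buildSitemap("https://example.com", [
    { path: "/landing/ai-companion-app/" },
    { path: "/app/chat", noindex: true },
  ])
);
```

In this example only the landing page ends up in the output, because the chat route is flagged as noindex.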

Control Low-Quality Pages Properly

If your platform generates large-scale pages like character profiles, then controlling their indexing is critical to maintain SEO quality.

- Use robots.txt to block unnecessary crawling

This saves crawl budget for important pages.

- Apply noindex on low-value pages

This prevents them from appearing in search results.

Handle Age Gate and Restrictions Smartly

Compliance is important, but it should not block search engines completely, so implementation must balance both.

- Ensure bots can access content

Do not fully block HTML behind age validation.

- Use bot-friendly rendering approach

Keep content visible while controlling user access separately.

Monitor Indexing Using Search Console

Fixing issues is not enough. You also need to track whether Google is actually indexing your pages.

- Check “Crawled - currently not indexed” issues

This helps identify content visibility problems.

- Monitor coverage and indexing trends

This shows whether fixes are working or not.

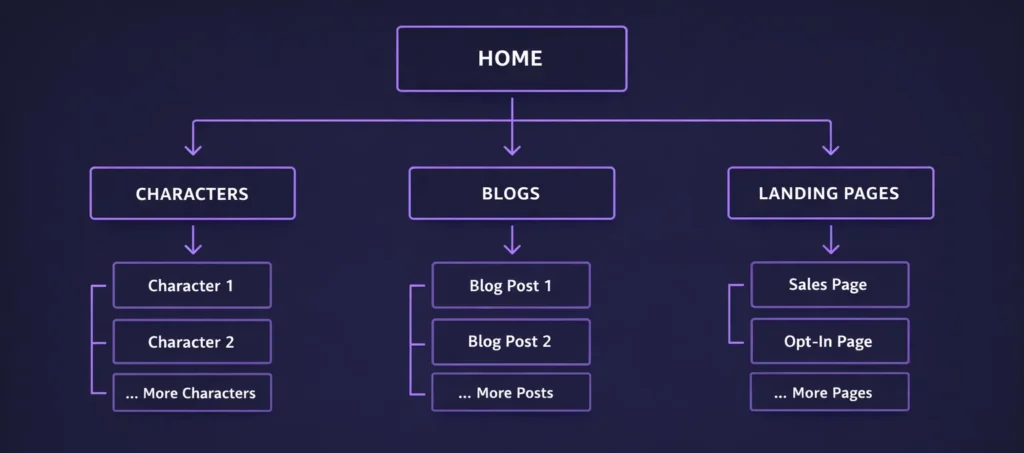

Site Architecture for AI Companion SEO (How to Structure Your Platform for Ranking)

In most AI platforms, everything is built around the app interface, but that approach fails in SEO because search engines cannot navigate dynamic systems easily, so you need to create a separate SEO-friendly architecture layer that organizes your content into clear categories.

Recommended URL Structure (SEO-Friendly)

Your URL structure should clearly reflect hierarchy so that both users and search engines can understand the relationship between pages.

/image-generator/

/image-generator/nsfw-ai-images/

/characters/

/characters/anime-ai-girlfriend/

/blogs/

/blogs/technical-seo-ai-platforms/

/landing/ai-companion-app/

/chatbots/ai-roleplay-chat/

This structure ensures that:

- categories build topical authority

- pages are grouped logically

- crawl paths are clear and efficient
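If pages are created programmatically, it is worth generating their paths from one helper so the hierarchy stays consistent. The sketch below is a minimal example; `slugify` and `buildPath` are our own names, and the slug rules (lowercase ASCII letters, digits, and hyphens) are an assumption you may want to relax for non-English names.

```javascript
// Turn a display name into a lowercase, hyphen-separated slug.
function slugify(name) {
  return name
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // any run of other characters becomes one hyphen
    .replace(/^-+|-+$/g, ""); // trim hyphens at both ends
}

// Build a hierarchical path: /category/ or /category/page-name/.
function buildPath(category, pageName) {
  const base = "/" + slugify(category) + "/";
  return pageName ? base + slugify(pageName) + "/" : base;
}

console.log(buildPath("Characters", "Anime AI Girlfriend"));
// /characters/anime-ai-girlfriend/
console.log(buildPath("Image Generator"));
// /image-generator/
```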

Separate App Layer from SEO Layer

One critical thing we always implement is separating the actual product interface from SEO pages, because mixing both creates indexing issues.

- Keep chat interface under app routes

Example: /app/chat or /dashboard

- Do not rely on app pages for SEO

These are user-specific and not designed for indexing.

- Build dedicated SEO pages outside app flow

These pages should be crawlable, static or server-rendered, and optimized for search.

Internal Linking Strategy for Better Crawlability

Search engines depend on internal links to discover and prioritize pages, so linking should not be random but structured.

- Link categories to subpages

This helps search engines understand hierarchy.

- Connect blogs to landing pages

This improves conversion flow and keyword relevance.

- Avoid orphan pages

Every important page should be reachable within 2–3 clicks of the homepage.
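Click depth and orphan pages can both be checked with a breadth-first search over your internal link graph. The sketch below assumes you already have the graph as an object that maps each URL to the URLs it links to (for example from a crawl export); that input format, the function names, and the default limit of 3 clicks are our assumptions.

```javascript
// Breadth-first search from the start page. `links` maps each URL to the
// URLs it links to. Returns a Map of URL -> number of clicks from the start.
function clickDepths(links, start = "/") {
  const depths = new Map([[start, 0]]);
  const queue = [start];
  while (queue.length > 0) {
    const current = queue.shift();
    for (const target of links[current] || []) {
      if (!depths.has(target)) {
        depths.set(target, depths.get(current) + 1);
        queue.push(target);
      }
    }
  }
  return depths;
}

// List important pages that are unreachable (depth null) or too deep.
function linkingProblems(links, importantPages, maxDepth = 3) {
  const depths = clickDepths(links);
  return importantPages
    .filter((url) => !depths.has(url) || depths.get(url) > maxDepth)
    .map((url) => ({ url, depth: depths.has(url) ? depths.get(url) : null }));
}

const siteLinks = {
  "/": ["/characters/", "/blogs/"],
  "/characters/": ["/characters/anime-ai-girlfriend/"],
  "/blogs/": ["/blogs/technical-seo-ai-platforms/"],
  "/blogs/technical-seo-ai-platforms/": ["/landing/ai-companion-app/"],
};

console.log(
  linkingProblems(siteLinks, ["/landing/ai-companion-app/", "/chatbots/ai-roleplay-chat/"])
);
// reports /chatbots/ai-roleplay-chat/ with depth null, because nothing links to it
```

Breadth-first search visits pages in order of distance, so the first depth recorded for a URL is always its shortest click path from the homepage.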

Conclusion

AI companion platforms are built on powerful and modern technologies, but as we have seen across multiple real-world projects at SEO Circular, the same tech stack that delivers a great user experience can silently break your SEO if rendering, content delivery, and crawlability are not handled correctly from the beginning.

- Technical SEO in AI platforms is not optional, because search engines need structured, visible, and consistent content to understand and rank your website.

- Issues like client-side rendering, JavaScript-based content loading, and dynamic responses can prevent Google from accessing real content, even if users see everything perfectly.

- Uncontrolled scaling, such as thousands of similar character pages or blocked content through login and age gates, can weaken overall domain quality and indexing efficiency.

- Proper implementation of SSR, ISR, structured site architecture, and controlled indexing can completely change how your platform performs in search results.

- Most importantly, SEO and development must work together, because technical decisions directly impact visibility, rankings, and long-term growth.

As per our experience, once these core technical issues are fixed, AI companion platforms not only start getting indexed properly but also build sustainable organic traffic that reduces dependency on paid acquisition and improves overall growth efficiency.

FAQs: Technical SEO for NSFW AI Companion Platforms

Recovery time depends on the severity of the issue and how quickly fixes are implemented. In most cases, once rendering and crawlability problems are resolved, initial improvements can be seen within 2–4 weeks, while full recovery may take 2–3 months.

Yes, but only if you create a controlled layer of static or semi-static content. Dynamic responses alone are not reliable for SEO, so you need structured landing pages that target specific keywords and intents.

The biggest mistake is prioritizing product functionality over crawlability, especially relying heavily on client-side rendering without considering how search engines process content.

Page speed is important, but not at the cost of rendering quality. A fast-loading page with no indexable content is worse than a slightly slower page with proper HTML content.

A hybrid approach works best. High-value pages should be manually optimized, while scalable pages can be generated programmatically with strict quality controls.
