Top Python Libraries for Technical SEO Automation 2026

Discover the best Python libraries for SEO automation in 2026. Learn how tools like BeautifulSoup, Pandas, and Advertools help automate audits, keyword analysis, and reporting at scale.

Published Date: March 23, 2026

Search engine optimization has never moved faster, and manual workflows can no longer keep up. In 2026, the businesses pulling ahead in organic search aren’t simply hiring more SEO talent; they’re deploying Python-driven automation to do work that used to take entire teams hours each week.

Python has firmly established itself as the language of choice for technical SEO. With a massive ecosystem of purpose-built libraries, it now handles everything from crawling millions of URLs and parsing structured data to tracking keyword shifts in real time and automating on-page audits at scale.

The result? Faster insights, lower operational costs and a measurable competitive edge.

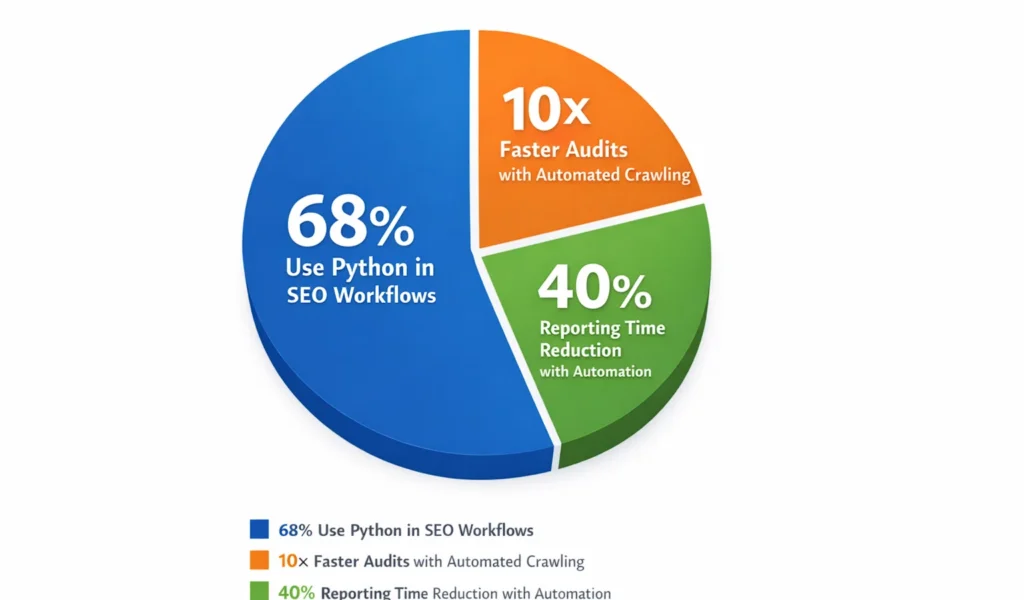

- 68% of enterprise SEO teams use Python in their workflows

- 10× faster audits with automated crawling vs manual review

- 40% reduction in reporting time reported by automation adopters

In this guide, the SEO Circular team breaks down the most powerful Python libraries available today: the ones actively shaping how forward-thinking business owners and their marketing teams automate repetitive SEO tasks, surface actionable data faster and build scalable search strategies without ballooning their headcount.

Whether you’re just beginning to explore Python for your SEO stack or looking to upgrade the tools you’re already using, this breakdown will give you a precise, data-informed view of what each library does, where it excels and which scenarios it’s built for.

Key Takeaways

- Python’s ecosystem in 2026 is purpose-built for SEO — not just adapted for it.

- Always define your SEO task first and let the task choose the library, not the other way around.

- BeautifulSoup and Requests are the smartest entry points for teams new to SEO automation.

- Pandas and Advertools together cover the bulk of any technical SEO audit workflow.

- spaCy-driven NLP is no longer optional for businesses competing in content-heavy verticals.

Scale Your SEO with Python Automation

At SEO Circular, we help businesses automate technical SEO, reporting, and data workflows using Python-driven systems that reduce manual effort and deliver faster, more accurate insights.

Partner with us to build powerful automation workflows.

Python Vs. Other Automation Options

Business owners are often presented with alternatives such as dedicated SEO platforms, Google Sheets macros or no-code tools like Zapier. Here is how Python compares across the dimensions that matter most at scale.

| Capability | Python | SEO Platforms | No-Code Tools | Spreadsheets |
|---|---|---|---|---|
| Custom Crawling at Scale | Full control | Limited | Not supported | Not supported |
| API Data Orchestration | Unlimited | Platform APIs only | Connector-dependent | Manual only |
| Log File Analysis | Native | Some tools | Not supported | Limited rows |
| Cost at Scale | Low (open source) | High subscription | Mid-tier | Low |
| Reusable & Version-Controlled | Yes | No | Partial | No |
| ML & NLP Integration | Native | Rarely | Not supported | Not supported |

Where Python Delivers The Biggest ROI In SEO

Not every SEO task benefits equally from Python automation. The highest-impact applications, where time and cost savings are most measurable, fall into these categories:

Technical Site Auditing

Crawl your entire domain and identify broken links, redirect chains, duplicate content and missing metadata in a fraction of the time a manual review takes.

Keyword Clustering & SERP Analysis

Group thousands of keywords by intent, analyse SERP feature distribution and identify content gap opportunities automatically at a scale no analyst can match manually.
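As a minimal illustration of intent grouping, the sketch below buckets keywords by the modifier words they contain. The modifier lists and the `classify_intent` and `cluster_by_intent` helpers are hypothetical, and this rule-based approach is a simplified stand-in: production keyword clustering more often compares SERP overlap or text embeddings.

```python
from collections import defaultdict

# Hypothetical modifier lists: tune them to your own market and language.
INTENT_MODIFIERS = {
    "transactional": ("buy", "price", "cheap", "discount", "coupon", "order"),
    "commercial": ("best", "top", "review", "reviews", "vs", "compare", "alternative"),
    "informational": ("how", "what", "why", "guide", "tutorial", "tips"),
}


def classify_intent(keyword: str) -> str:
    """Return the first intent whose modifier appears as a whole word in the keyword."""
    tokens = keyword.lower().split()
    for intent, modifiers in INTENT_MODIFIERS.items():
        if any(modifier in tokens for modifier in modifiers):
            return intent
    return "unclassified"


def cluster_by_intent(keywords):
    """Group keywords into intent buckets, keeping input order within each bucket."""
    clusters = defaultdict(list)
    for keyword in keywords:
        clusters[classify_intent(keyword)].append(keyword)
    return dict(clusters)


if __name__ == "__main__":
    sample = ["buy running shoes", "best running shoes 2026", "how to lace running shoes", "running shoes"]
    for intent, group in cluster_by_intent(sample).items():
        print(f"{intent}: {group}")
```

Keywords that land in "unclassified" are usually the ones worth checking against live SERPs by hand.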

Log File Analysis

Parse server logs to understand how Googlebot is crawling your site, revealing wasted crawl budget, overlooked pages and indexation bottlenecks that standard tools don’t show.
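A minimal sketch of the first step, assuming your server writes the common Apache/Nginx "combined" log format: parse each line with a regular expression and count the requests whose user agent claims to be Googlebot. The pattern and the `googlebot_hits` helper are illustrative assumptions, so adjust the regex to your own log format. User-agent strings can be spoofed, which is why Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup before drawing conclusions.

```python
import re
from collections import Counter

# "Combined" format: ip ident user [time] "METHOD path PROTOCOL" status size "referer" "agent"
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count claimed-Googlebot requests per URL path and per status code."""
    by_path, by_status = Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match is None or "Googlebot" not in match["agent"]:
            continue  # skip unparseable lines and non-Googlebot traffic
        by_path[match["path"]] += 1
        by_status[match["status"]] += 1
    return by_path, by_status
```

Passing an open log file to `googlebot_hits` and printing `by_path.most_common(20)` gives a quick view of where crawl activity concentrates; a high share of 404 or 301 responses in `by_status` points to wasted crawl budget.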

Automated Reporting Pipelines

Pull data from Google Search Console, Google Analytics and third-party APIs into a single automated dashboard, eliminating hours of weekly manual reporting.
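For the Search Console leg of such a pipeline, a sketch along these lines could work. It assumes the `google-api-python-client` package and an authorised credentials object (for example, a service account added to the property and granted the `https://www.googleapis.com/auth/webmasters.readonly` scope); the `fetch_search_analytics` and `flatten_rows` helper names are hypothetical.

```python
from typing import Any

DIMENSIONS = ("query", "page")
METRICS = ("clicks", "impressions", "ctr", "position")


def fetch_search_analytics(credentials: Any, site_url: str, start_date: str, end_date: str) -> list:
    """Query the Search Console API for performance rows split by query and page."""
    # Imported here so flatten_rows() below can be used without the client library.
    from googleapiclient.discovery import build

    service = build("searchconsole", "v1", credentials=credentials)
    body = {
        "startDate": start_date,  # "YYYY-MM-DD"
        "endDate": end_date,
        "dimensions": list(DIMENSIONS),
        "rowLimit": 25000,  # per-request maximum; page through larger sites with "startRow"
    }
    response = service.searchanalytics().query(siteUrl=site_url, body=body).execute()
    return response.get("rows", [])


def flatten_rows(rows, dimensions=DIMENSIONS):
    """Turn API rows like {"keys": [...], "clicks": 3, ...} into flat report records."""
    records = []
    for row in rows:
        record = dict(zip(dimensions, row["keys"]))
        record.update({metric: row.get(metric, 0) for metric in METRICS})
        records.append(record)
    return records
```

The flat records load straight into a pandas DataFrame or a spreadsheet export, and scheduling the script (for example with cron) is what turns it into a weekly report.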

Content & NLP Optimisation

Use natural language processing libraries to analyse content at scale – scoring topical depth, entity coverage and semantic alignment against top-ranking pages.

The business case is clear: Python does not replace SEO expertise – it amplifies it.

The right library in the right workflow can turn a one-person operation into one that performs like a team of five, without the overhead. The following sections break down exactly which libraries to use and when.

How To Choose The Right Library

Not every library matches every use case. Here’s a structured framework for matching the right tool to your specific SEO goals without wasting time on trial and error.

With so many Python libraries available for SEO-related tasks, one of the most common mistakes business owners and their teams make is reaching for the most popular tool rather than the most appropriate one. Choosing the wrong one means lost setup time, limited output or rebuilding workflows from scratch months later.

The right library comes down to five core criteria. Evaluate any candidate against each of these before committing.

1) Define Your SEO Task First

Crawling, keyword analysis, log parsing, and content auditing each demand different capabilities. Start with the task, not the tool.

2) Assess Your Team’s Python Depth

Some libraries require advanced scripting knowledge. Others are beginner-friendly with clear documentation. Match complexity to capability.

3) Check Scale Requirements

A library that handles 10,000 URLs may break at 1 million. Know your data volume before selecting a crawling or processing tool.

4) Verify Active Maintenance

Abandoned libraries accumulate compatibility issues fast. Check GitHub for recent commits, open issues, and community activity.

5) Consider Integration Needs

Will it connect to Google Search Console, your CMS, or your reporting stack? Compatibility with your existing tools matters significantly.

Match Your Goal To The Right Library Type

Use this decision guide to quickly identify which category of library fits your immediate SEO priority.

| Your SEO goal | Library category | Best for | Complexity |
|---|---|---|---|
| Crawl & audit site structure | Web crawling | Technical audits, broken links, redirect chains | Intermediate |
| Extract on-page data at scale | HTML parsing | Meta tags, headings, schema, structured data | Beginner |
| Analyse keyword & SERP data | API wrappers | Rank tracking, SERP features, search volume | Intermediate |
| Process server log files | Data processing | Crawl budget, bot behaviour, indexation gaps | Advanced |
| Optimise content with NLP | NLP / ML | Entity analysis, topical depth, semantic scoring | Advanced |
| Automate reporting pipelines | Data viz & reporting | Dashboards, scheduled exports, stakeholder reports | Intermediate |

A Common Mistake To Avoid:

Don’t select a library on popularity alone. `Scrapy` is powerful but overkill for simple page audits. `BeautifulSoup` is elegant for parsing, but it can’t replace a full crawler. Choosing the right tool for each task is what separates efficient SEO automation from messy, disorganised scripts.

Quick Check Before You Commit

Run through this checklist before finalising any library for your SEO stack:

1) Does it have active GitHub commits within the last 6 months?

2) Is there clear documentation with practical examples, not just API references?

3) Has it been tested at the data scale your workflows require?

4) Does it integrate cleanly with your existing tools (GSC, Analytics, CMS)?

5) Is the learning curve realistic for your team’s current skill level?

6) Can it handle your task without requiring heavy third-party dependencies?
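Part of the maintenance check can be scripted. The sketch below swaps in PyPI’s public JSON API (`https://pypi.org/pypi/<package>/json`) as a proxy for GitHub activity: it reads the upload times listed under `urls`, which describe the files of the latest release. A recent release is only one signal, so treat it as a complement to reviewing commits and open issues; the helper names are hypothetical.

```python
import json
from datetime import datetime, timedelta, timezone
from typing import Optional
from urllib.request import urlopen


def fetch_pypi_metadata(package: str) -> dict:
    """Download a package's metadata from PyPI's JSON API."""
    with urlopen(f"https://pypi.org/pypi/{package}/json", timeout=10) as response:
        return json.load(response)


def latest_upload(metadata: dict) -> Optional[datetime]:
    """Newest upload time among the latest release's files ("urls"), or None."""
    times = [
        # Replace "Z" so fromisoformat also works on Python versions before 3.11.
        datetime.fromisoformat(file["upload_time_iso_8601"].replace("Z", "+00:00"))
        for file in metadata.get("urls", [])
    ]
    return max(times, default=None)


def released_recently(metadata: dict, months: int = 6, now: Optional[datetime] = None) -> bool:
    """True if the latest release is within roughly `months` months (30-day months)."""
    last = latest_upload(metadata)
    if last is None:
        return False
    now = now or datetime.now(timezone.utc)
    return now - last <= timedelta(days=30 * months)
```

For example, `released_recently(fetch_pypi_metadata("advertools"))` returns `True` only if the newest release was uploaded within about six months.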

Top Python Libraries For SEO Automation In 2026

Seven battle-tested libraries covering the full breadth of SEO automation — from crawling and parsing to trend analysis and natural language processing.

1) BeautifulSoup – HTML Parsing

BeautifulSoup reads the raw HTML of any web page and lets you extract exactly what you need, including title tags, meta descriptions, heading structure, canonical URLs and internal links, with precision and very little setup. It is one of the most readable libraries in the Python ecosystem, making it a natural starting point for teams new to SEO automation.

Best For:

On-page audits, meta tag extraction, internal link mapping.

Limitation:

Cannot fetch pages on its own – needs Requests or Selenium to retrieve HTML first.

Complexity:

Low – most tasks require fewer than 10 lines of logic.

How it helps your SEO:

Pair it with Requests to feed in a list of URLs, and it will extract every on-page SEO element across your entire site in minutes, automatically flagging missing meta descriptions, duplicate titles or broken heading hierarchies without opening a single page manually.
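A minimal sketch of that extraction, assuming the HTML has already been fetched (for example with Requests). The `extract_seo_elements` and `audit_flags` helpers are hypothetical names, and the checks are illustrative rather than a complete audit.

```python
from bs4 import BeautifulSoup


def extract_seo_elements(html: str) -> dict:
    """Pull the core on-page SEO elements out of one HTML document."""
    soup = BeautifulSoup(html, "html.parser")

    title_tag = soup.find("title")
    description_tag = soup.find("meta", attrs={"name": "description"})
    canonical_tag = soup.find("link", rel="canonical")  # matches even if rel has several values

    return {
        "title": title_tag.get_text(strip=True) if title_tag else None,
        "meta_description": description_tag.get("content") if description_tag else None,
        "canonical": canonical_tag.get("href") if canonical_tag else None,
        "h1": [h1.get_text(strip=True) for h1 in soup.find_all("h1")],
    }


def audit_flags(elements: dict) -> list:
    """Flag common issues; these checks are illustrative, not exhaustive."""
    flags = []
    if not elements["title"]:
        flags.append("missing title")
    if not elements["meta_description"]:
        flags.append("missing meta description")
    if len(elements["h1"]) != 1:
        flags.append(f"expected 1 <h1>, found {len(elements['h1'])}")
    return flags
```

In practice you would loop over your URL list, pass each `requests.get(url, timeout=10).text` into `extract_seo_elements`, and collect the flags per URL; duplicate titles can then be found by counting the `title` values across pages.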

2) Requests – HTTP Client

Requests is a foundational building block that many Python SEO scripts rely on. It handles fetching web pages and calling external APIs (including Google Search Console, Ahrefs and Semrush), managing response codes, redirects, headers and authentication behind the scenes so your scripts can focus on the SEO logic.

Best For:

API integration, page fetching, HTTP status audits, redirect chain analysis.

Limitation:

Cannot render JavaScript – dynamically loaded content requires Selenium.

Complexity:

Low – intuitive syntax, extensive documentation.

How it helps your SEO:

Run automated HTTP status checks across thousands of URLs to surface 404 errors, redirect chains and server errors: checks that would take hours by hand run in minutes with a Requests-powered script.
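A minimal sketch of such a status check, assuming a shared `requests.Session` and a URL list you supply. `response.history` is the list of intermediate redirect responses Requests records while following redirects; the `check_url` and `needs_attention` helpers and the "more than one hop" rule are illustrative assumptions.

```python
import requests


def check_url(session: requests.Session, url: str, timeout: float = 10.0) -> dict:
    """Fetch a URL, follow redirects, and record every hop's status code."""
    try:
        response = session.get(url, allow_redirects=True, timeout=timeout)
    except requests.RequestException as exc:
        return {"url": url, "final_status": None, "final_url": None, "chain": [], "error": str(exc)}

    # response.history holds the intermediate redirect responses, oldest first.
    chain = [(hop.status_code, hop.url) for hop in response.history]
    return {
        "url": url,
        "final_status": response.status_code,
        "final_url": response.url,
        "chain": chain,
        "error": None,
    }


def needs_attention(result: dict) -> bool:
    """Flag request errors, 4xx/5xx responses, and chains with more than one redirect hop."""
    if result["error"] is not None:
        return True
    return result["final_status"] >= 400 or len(result["chain"]) > 1
```

Call `check_url(session, url)` for each URL inside `with requests.Session() as session:` and keep the results where `needs_attention` returns `True`. For long lists, a short pause between requests keeps the check from straining your own server.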

3) Selenium – Browser Automation

Selenium programmatically controls a real browser such as Chrome, Firefox or Edge. This makes it the right tool whenever JavaScript rendering matters for SEO: approximating what Googlebot sees after rendering versus what your server sends, testing Core Web Vitals triggers or scraping content that only appears after user interaction or page load events.

Best For:

JS-rendered page audits, Core Web Vitals testing, dynamic content extraction.

Limitation:

Significantly slower and more resource-intensive than Requests at scale.

Complexity:

Medium – requires browser driver setup and handling async behaviour.

How it helps your SEO:

Verify that your JavaScript-heavy pages render correctly for search engines, catch lazy-loaded content that crawlers miss and automate repetitive browser-based SEO tasks such as checking hreflang rendering or structured data on dynamic pages.
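As a sketch of the raw-versus-rendered comparison, the code below loads a page in headless Chrome and lists the links that only appear after JavaScript runs. It assumes Selenium 4 with a local Chrome install (recent Selenium releases can locate a matching driver automatically). Headless Chrome only approximates what Googlebot renders, and the helper names and the fixed pause are assumptions.

```python
import time
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect href values from <a> tags using only the standard library."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def rendered_html(url, settle_seconds=3.0):
    """Load a page in headless Chrome and return the DOM after JavaScript has run."""
    # Imported here so extract_links() works without Selenium installed.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    options.add_argument("--headless=new")
    driver = webdriver.Chrome(options=options)
    try:
        driver.get(url)  # returns once the page's load event has fired
        # Crude pause for late XHR content; a WebDriverWait on a known element is more robust.
        time.sleep(settle_seconds)
        return driver.page_source
    finally:
        driver.quit()


def js_only_links(raw_html, rendered):
    """Links present only after rendering, which a non-rendering crawler would miss."""
    return extract_links(rendered) - extract_links(raw_html)
```

Fetch the raw HTML with Requests and the rendered DOM with `rendered_html(url)`, then pass both to `js_only_links`; any links it returns depend on JavaScript to be discovered.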

4) Advertools – SEO Toolkit

Advertools is the most SEO-specific library in this list, built by a practitioner for practitioners. It handles sitemap and robots.txt analysis, web crawling, server log processing and keyword manipulation, all within a single, coherent package. For teams running technical SEO at scale, it removes the need to stitch together multiple libraries for the most common audit tasks.

Best For:

Sitemaps, robots.txt audits, crawling, log file analysis, keyword tools.

Limitation:

Smaller community and fewer third-party integrations than general-purpose libraries.

Complexity:

Medium – SEO-specific functions are well documented with clear outputs.

How it helps your SEO:

Parse your entire sitemap in seconds, audit your robots.txt for crawl blocking errors and process server log files to understand exactly how Googlebot is spending its crawl budget across your domain – all without building custom tooling from scratch.
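A sketch of the sitemap step, assuming advertools’ `sitemap_to_df` function, which returns one row per URL with a `loc` column and, where the sitemap provides it, `lastmod`. The summary logic is plain pandas; the `summarise_sitemap` helper and the 365-day staleness threshold are hypothetical choices.

```python
import pandas as pd


def fetch_sitemap(sitemap_url):
    """Download a sitemap (or sitemap index) into a DataFrame using advertools."""
    import advertools as adv  # imported here; summarise_sitemap() needs only pandas

    return adv.sitemap_to_df(sitemap_url)


def summarise_sitemap(sitemap_df, stale_after_days=365):
    """URLs per top-level directory, plus how many have a <lastmod> older than the cutoff."""
    df = sitemap_df.copy()
    # First path segment: "https://example.com/blog/post" -> "blog"; bare domains -> "(root)".
    df["section"] = (
        df["loc"]
        .str.extract(r"^https?://[^/]+/([^/?#]*)", expand=False)
        .fillna("")
        .replace("", "(root)")
    )
    if "lastmod" in df.columns:
        # No-op if already datetimes; unparseable values become NaT and count as not stale.
        lastmod = pd.to_datetime(df["lastmod"], utc=True, errors="coerce")
        cutoff = pd.Timestamp.now(tz="UTC") - pd.Timedelta(days=stale_after_days)
        df["stale"] = lastmod < cutoff
    else:
        df["stale"] = False
    return (
        df.groupby("section")
        .agg(urls=("loc", "count"), stale=("stale", "sum"))
        .sort_values("urls", ascending=False)
    )
```

For the robots.txt side of the same audit, advertools also provides `robotstxt_to_df` and `robotstxt_test`.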

5) Pandas – Data Processing

Pandas is the data processing layer that sits underneath nearly every SEO automation pipeline. It handles bulk manipulation of crawl exports, keyword lists, Google Search Console data, rank tracking CSVs and log files, enabling filtering, grouping, deduplication, merging and reporting at a scale and speed that spreadsheet tools simply cannot support.

Best For:

GSC analysis, crawl data processing, keyword deduplication, automated reporting.

Limitation:

Memory-intensive with very large datasets – consider Polars for 10M+ rows.

Complexity:

Medium – DataFrame concepts require an initial learning curve.

How it helps your SEO:

Load a full Google Search Console export and identify keyword cannibalisation, declining queries and click-through rate anomalies across thousands of pages, in the time it would take to manually scroll through a spreadsheet.
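A minimal cannibalisation check, assuming query-and-page level data with `query`, `page`, `clicks` and `impressions` columns (the Search Console API returns this shape when both dimensions are requested). The 100-impression threshold and the helper name are hypothetical, so tune them to your traffic.

```python
import pandas as pd


def find_cannibalisation(gsc: pd.DataFrame, min_impressions: int = 100) -> pd.DataFrame:
    """Queries where two or more pages each earn at least `min_impressions` impressions."""
    significant = gsc[gsc["impressions"] >= min_impressions]
    summary = significant.groupby("query").agg(
        pages=("page", "nunique"),
        clicks=("clicks", "sum"),
        impressions=("impressions", "sum"),
    )
    # Keep only queries split across multiple pages, biggest opportunities first.
    return summary[summary["pages"] > 1].sort_values("impressions", ascending=False)
```

The impression filter keeps pages that rank only incidentally for a query from inflating the page count.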

6) Pytrends – Trend Analysis

Pytrends is an unofficial Python wrapper for Google Trends, bringing real-time and historical search interest data directly into your automation workflows. It lets you track how interest in specific keywords evolves over time, compare seasonal demand patterns and identify which geographies are driving search interest, all programmatically.

Best For:

Content timing, seasonal planning, geographic demand analysis, trend monitoring.

Limitation:

Unofficial API with rate limits; not suitable for continuous or high-frequency polling.

Complexity:

Low – minimal setup, straightforward data outputs.

How it helps your SEO:

Identify the weeks when search demand for your target topics typically peaks, so your content team publishes ahead of the curve rather than behind it, giving your pages time to be indexed and rank before search volume spikes.
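A sketch of that seasonality analysis, assuming pytrends’ `TrendReq`, `build_payload` and `interest_over_time` calls (the last returns a date-indexed DataFrame with one column per keyword plus an `isPartial` flag). Google Trends accepts at most five keywords per request and scales values 0–100 within each request, so compare keywords only within one payload. The `peak_weeks` helper is hypothetical.

```python
import pandas as pd


def fetch_interest(keywords, timeframe="today 5-y", geo=""):
    """Weekly Google Trends interest for up to five keywords via the unofficial pytrends wrapper."""
    from pytrends.request import TrendReq  # imported here; peak_weeks() needs only pandas

    pytrends = TrendReq(hl="en-US", tz=0)
    pytrends.build_payload(keywords, timeframe=timeframe, geo=geo)
    return pytrends.interest_over_time().drop(columns="isPartial", errors="ignore")


def peak_weeks(interest, top_n=3):
    """Average interest per ISO week number across all years; return each keyword's strongest weeks."""
    iso_week = interest.index.isocalendar().week
    weekly_mean = interest.groupby(iso_week).mean()
    return {
        keyword: [int(week) for week in weekly_mean[keyword].nlargest(top_n).index]
        for keyword in weekly_mean.columns
    }
```

Averaging across several years smooths out one-off spikes, so the returned week numbers reflect recurring demand rather than a single news event.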

7) NLTK/spaCy – Natural Language Processing

NLTK and spaCy bring natural language processing into your SEO stack. They process text in ways that mirror parts of how search engines interpret language: recognising named entities, measuring semantic relationships between words, scoring topical coverage and providing the building blocks for clustering keywords by intent. In 2026, as Google’s understanding of language continues to mature, NLP-driven content optimisation has become a measurable ranking differentiator in competitive verticals.

Best For:

Entity extraction, keyword clustering, content gap analysis, intent mapping.

Limitation:

Requires NLP understanding – model configuration adds meaningful complexity.

Complexity:

High – best suited for teams with data science capability.

How it helps your SEO:

Analyse your existing content and competitor pages to identify missing entities, subtopics and semantic gaps, then use those insights to build content that more completely satisfies search intent and signals topical authority to Google.
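A minimal entity-gap sketch, assuming spaCy with its small English model installed (`python -m spacy download en_core_web_sm`). Entities are compared as lower-cased strings, which is a deliberate simplification; the helper names and the 50% competitor-share threshold are hypothetical.

```python
from collections import Counter


def extract_entities(texts, model="en_core_web_sm"):
    """Set of lower-cased named entities for each document, using a spaCy pipeline."""
    import spacy  # imported here; entity_gaps() needs only the standard library

    nlp = spacy.load(model)
    return [{ent.text.lower() for ent in doc.ents} for doc in nlp.pipe(texts)]


def entity_gaps(our_entities, competitor_entities, min_share=0.5):
    """Entities found on at least `min_share` of competitor pages but missing from ours."""
    # Each page contributes a set, so an entity counts at most once per page.
    counts = Counter(entity for entities in competitor_entities for entity in entities)
    threshold = min_share * len(competitor_entities)
    return sorted(e for e, n in counts.items() if n >= threshold and e not in our_entities)
```

Run `extract_entities` on your page plus the top-ranking pages for a query, then pass your set and the competitor sets to `entity_gaps` to get a shortlist of topics to consider covering.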

Conclusion

Python has fundamentally changed what is possible in SEO and in 2026 that shift is accessible to businesses of all sizes. Libraries like BeautifulSoup, Requests, Advertools, Pandas, Selenium, Pytrends, and spaCy make it practical to automate the technical, repetitive, and data-heavy sides of search optimisation without ballooning your team.

That said, knowing which libraries exist is only the first step. Implementing them correctly requires technical and strategic expertise that goes well beyond the tools themselves. At SEO Circular, we combine Python-driven automation with deep search expertise to surface the insights that actually move rankings. If you are serious about scaling your SEO without scaling your overheads, our team is ready to help.

Commonly Asked Questions: Python Libraries For SEO Automation

Can Python SEO automation damage my website?

Yes. An aggressive crawl script without rate limiting can overload your server, and bulk changes pushed without a review stage can introduce errors at scale. Always test scripts in a staging environment first, and ensure every automation includes error handling and logging before it touches your live site.

Do I need programming experience to use Python for SEO?

Not for every task. Libraries like BeautifulSoup and Pytrends are approachable for analytically minded SEO professionals. However, advanced workflows involving log file analysis, NLP, or custom crawling pipelines will require genuine programming competence to build and maintain reliably.

Does collecting search data with Python violate Google’s terms?

It depends on the method. Calling official APIs such as the Google Search Console API or the Google Ads API is the compliant route, provided you stay within each API’s terms and quotas. Scraping Google search result pages directly violates Google’s terms of service and risks IP blocking. Always collect data through official APIs rather than by scraping search results.

How often should SEO automation scripts be reviewed?

At minimum, quarterly. Algorithm updates, site structural changes, and library version upgrades can all break existing scripts or produce inaccurate outputs. Building in logging and alerts from the start significantly reduces ongoing maintenance costs.

Is Python automation only worthwhile for large websites?

No. Smaller sites benefit too, primarily by eliminating repetitive manual tasks like weekly reporting, keyword trend monitoring and periodic on-page audits. Starting with one well-chosen automation task delivers immediate value and builds your team’s confidence before expanding the stack.
